Consulting, Microsoft Dynamics AX

Refreshing data in Dynamics AX made easy with the Test Data Transfer tool Part 1

When I was very young, I remember being absolutely obsessed with Santa Claus. I wanted my toys, but I also wanted to figure out how Santa was going to get in our house when we didn’t have a chimney. Was Santa going to build a chimney and then remove it? Was Santa going to knock on the door? Or was Santa going to just skip our house. My six year old mine just couldn’t make sense of it. So, I concatenated a plan. Instead of going to sleep as my parents had told me to, I would fake as if I was asleep and then get up and wait by the door listening for Santa. I remember listening and listening only to discover that my parents were the ones setting out the presents. I was so mad at Santa for having my parents do the work for him and not coming to my house.

Fast-Forward three decades later and I’m proud to say that Santa(okay, perhaps not him, Microsoft, but let’s just pretend) has come. He brought the Test Data Transfer tool and it is wonderful – just what every AX Technical Architect could wish for. See, one of the hardest things that a technical architect has to install and teach to a company is how to do a proper data move and code move. Dynamics AX has the different concepts like Model Id’s, which usually require some time to fully comprehend. And it can be really frustrating for experienced IT people to find themselves struggling with the concept. Consequently, the architect is often left having to install some serious discipline in data move procedures while also trying to ensure that the process is repeatable.

One of the most amazing things that Microsoft did was simplify this process by adding the Test Data Transfer tool. In one word, this tool is AMAZING.

There I was faced with my typical AX Technical Architect duties of getting the data move process down. I have a checklist of 30 something items that I use to do this manually, but usually I automate it. By using this tool, I was able to get my data refresh checklist down to 8 items!

So, this was a tool that Microsoft built to be able to update data within different environments – for example, all those Dev environments. It’s fast; it is quick, and it can be automated. But it has another advantage. It is AX aware. As long as you start with the best practice of updating data from the Production environment and letting it flow to everywhere else, it works. For the Model store or model database, that is a different scenario – and this has been a lot easier in AX 2012r2 since we have two separate databases.

Let’s see how to use this wonderful tool:

-

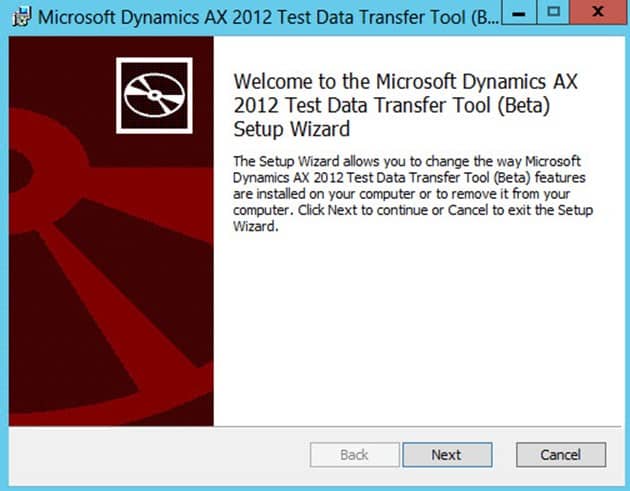

To install it is easy, but read the prereq’s such as having sql client on the machine and what not. You can check that in Microsoft documentation.

-

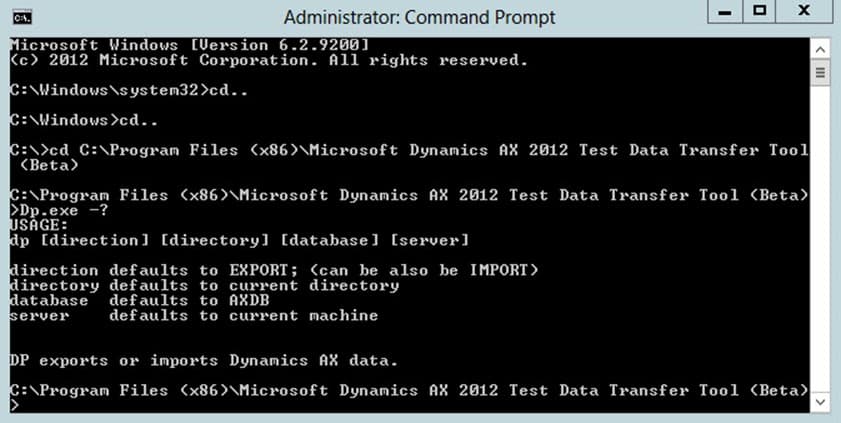

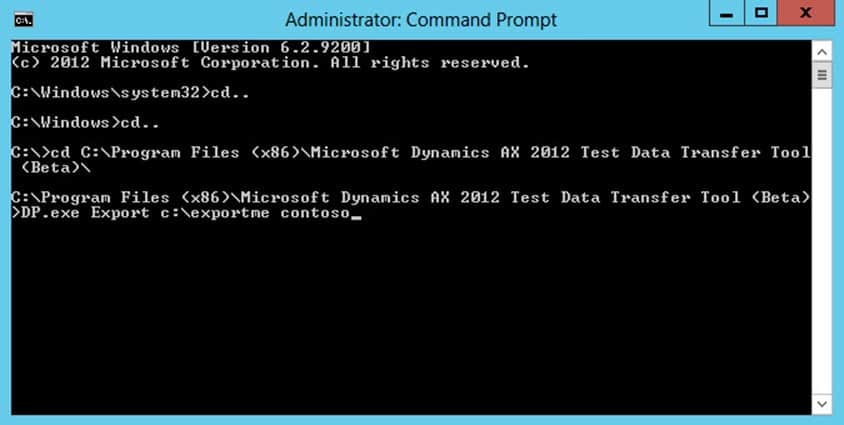

You can open the tool through the command prompt or do like I do and just call it from powershell. Here we will use the command prompt for the purposes of simplification. Remember to check the Microsoft documentation on Prerequisites for the machine that you choose for running this tool and run it as an administrator, making sure that the account has permissions to actually get the data from the SQL database. Here, I just changed the directory of the command prompt to where the DP.exe file was located and ran the “-?” to list all the arguments for running the tool. If you don’t fill in the arguments it will default to them. Now, let’s run the tool.

-

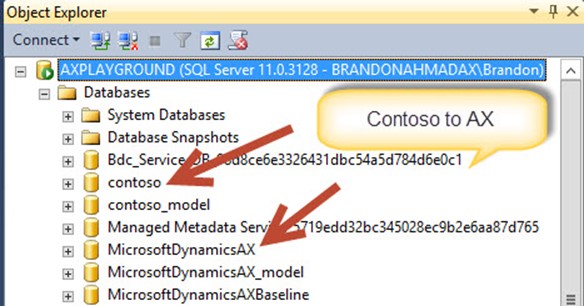

Here, I want you all to see that I’ve taken my newly custom built sample Dynamics AX machine with CU7, AX, SharePoint 2013, SQL 2012, and Team Foundation Server and I’ve imported the two sample databases inside of it. What I want to do is do a core data refresh from the sample database to my new database. I don’t want the same security or I don’t want the same environmental data. Also, I don’t want to have to worry about ModelID’s messing up my data. I just want to refresh the data from contoso to my lab machine environment MicrosoftDynamicsAX.

-

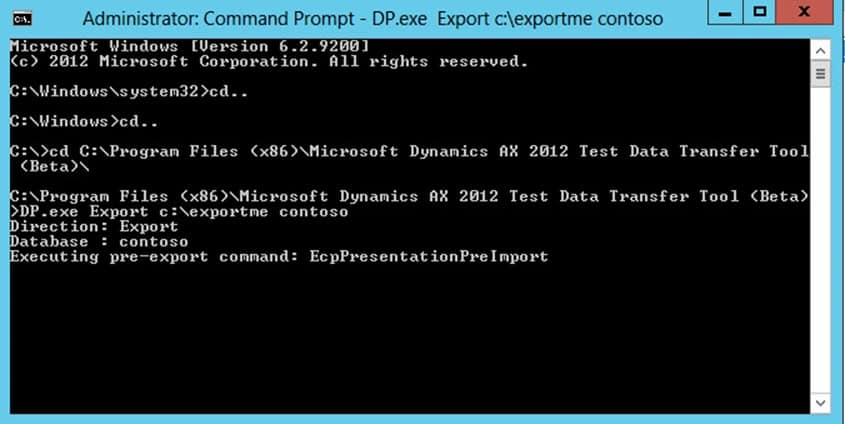

Now, it is a 2 step operation, but I like it. First you export the data to some location in a series of files. These files are nice because they retain the Model ID’s and other information. Plus, you can send these out to a file share so that you can easily deploy them to different development environments. Notice how I run the command below when the Test Data Transfer Tool is installed on the same SQL Server Database that I’m using for Exporting. Notice, how I don’t use switches. You just type the arguments out in a space delimited fashion. Here I don’t have to specify the database server because I’m running the tool from the server that the database is located on. So, I’m going to export the data into a folder called “c:exportme” which contains data from the “contoso” database.

-

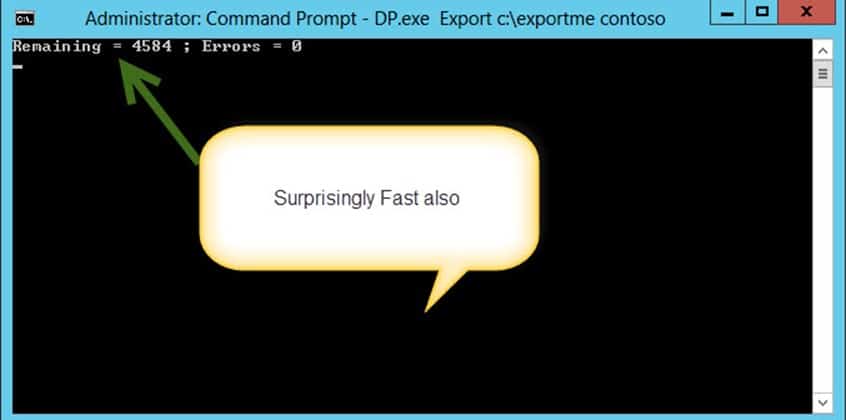

And for this one, just like at the slide. You’ll notice that the export is really fast. It took 7 minutes on my lab machine on a 18 gigabyte database to generate the export. Notice how it just eats up all those thousands of AX tables.

-

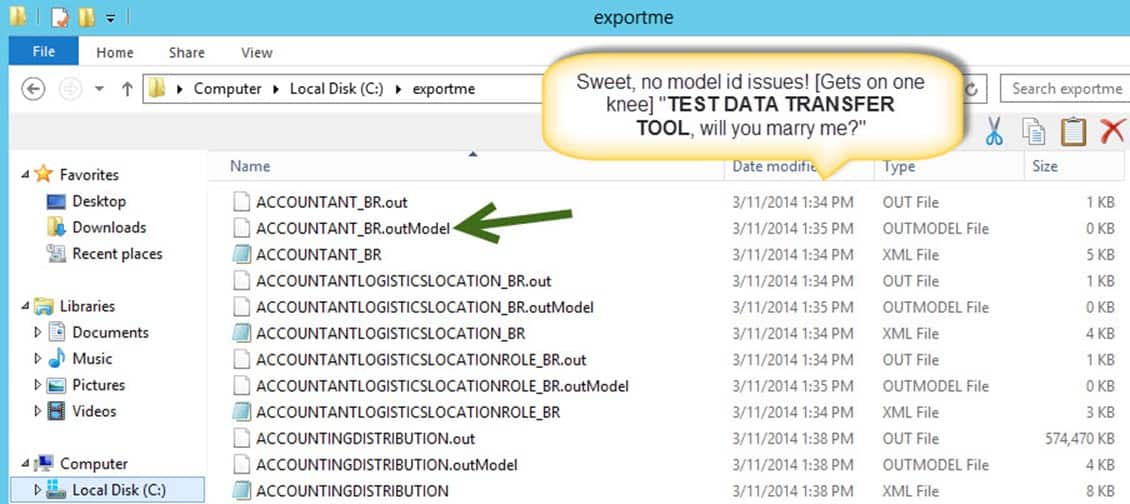

And our export is done. Now, some really cool things. First, notice that it generates 3 files per a table for every export. The *.out file contains the data, the *.xml file contains the columns that make up the table, and the .outmodel is where it gets special. This contains the modelid’s and what not so that things don’t get mixed up. Sweet!!!

-

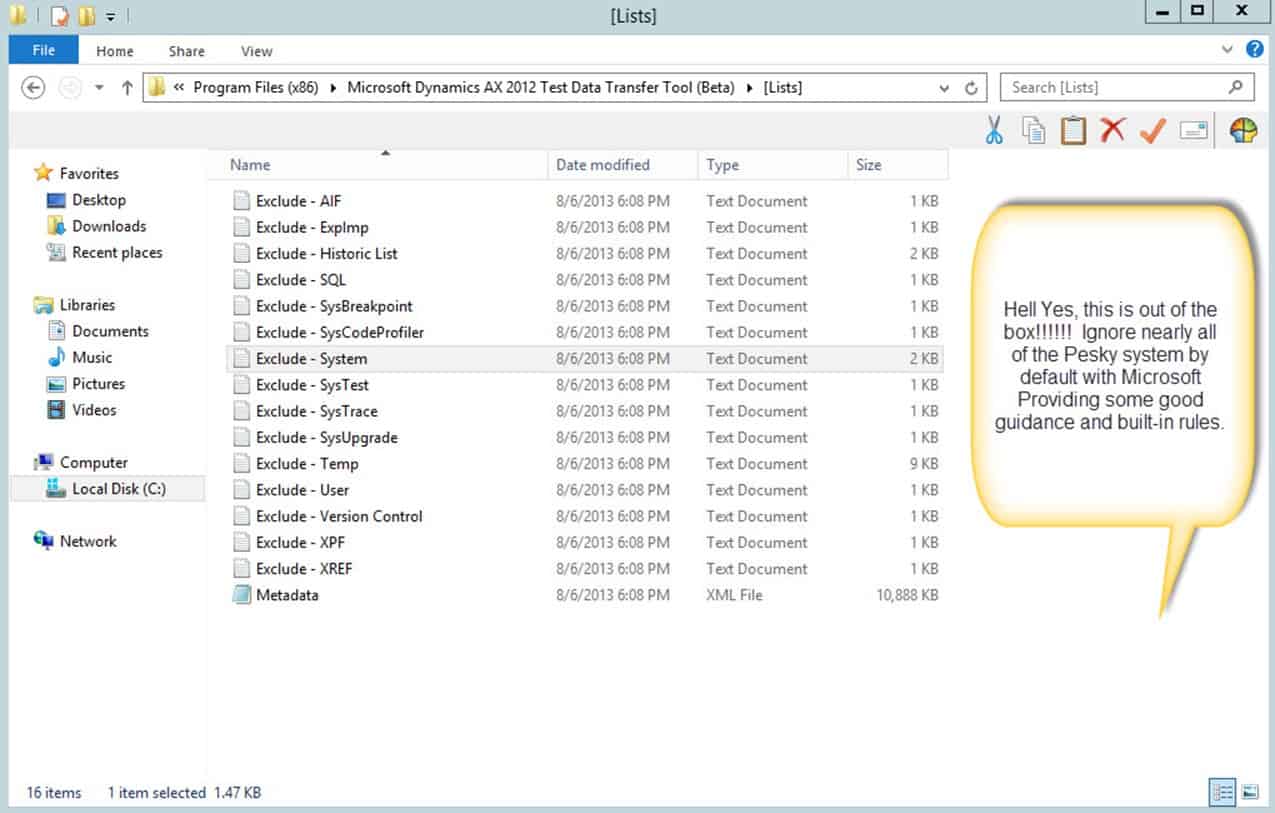

But it stays nice. Let’s go back to the directory installation where the tool is located. Oh, it is over.. I need to jump up and down right now. Notice that Microsoft has even gone so far as to exclude most of the variable data such as security user names, service break points, and a bunch of other system data for you. Now, this really makes it easier to export data to environments because you don’t have to keep overwriting the data!!! Ease of Use is so wonderful. Of course, you can customize this quite easily (see Microsoft documentation), but it is the fact that there is so much out of the box already there. The only thing you have to remember is to always do the export from what is or will be the production database. [Note: you are ignoring the pesky system tables which make data refresh take so long]

Good job Microsoft. On the next post, part 2, let’s import this so that we can see how that works.

13775

13775